Some samples:

Recent voluntary euthanasia hullabaloos such as the Terri Schiavo case have revealed a public that’s largely divided and somewhat confused as to what death is and when it should actually be declared. This issue is set to get increased attention as a) more people vie for increased control over their right to die, b) our medical sensibilities migrate increasingly toward a neurological understanding of what it means to be ‘alive’ in a meaningful sense, and c) people come to realize that the potential for cryonics and other advanced neural rescue operations will give rise to an information-theoretic interpretation of when death should truly be declared.

Skype, the Web telephone company, said on Monday it would allow consumers in the United States and Canada to make free phone calls, a promotional move that marks a new blow to conventional voice calling services.

The offer, which extends through the end of 2006, covers calls from computers or a new category of Internet-connected phones running Skype software making calls to traditional landline or mobile phones within the United States and Canada.

Previously, users of Skype, a unit of online auctioneer eBay Inc., were required to pay for calls from their PCs to traditional telephones in both countries. Calls from North America to phones in other countries will incur charges.

Skype already offers free calling to users worldwide who call from computer to computer.

Thankfully, for those of us who were unable to attend the Singularity Summit at Stanford this past Saturday, several bloggers chronicled the event:


I've completed the first draft of the talk I'm going to give at the IEET's Human Enhancement Technologies and Human Rights conference at Stanford in a couple of weeks. The topic for my panel is "From Human Rights to the Rights of Persons," and I'll be speaking on Saturday May 27 at 4:30 alongside Jeff Medina and Martine Rothblatt.

As the potential for enhancement technologies migrates from the theoretical to the practical, a difficult and important decision will be imposed upon human civilization, namely whether or not we are morally obligated to biologically enhance non-human animals and bring them along with us into advanced cybernetic and postbiological existence. There will be no middle road that can be taken; we will either have to leave animals in their current evolved state or bring as many sentient creatures along with ourselves into an advanced post-Darwinian mode of being. A strong case can be made that life and civilizations on Earth have already been following this general tendency and that animal uplift will be a logical and inexorable developmental stage along this continuum of progress. But tendency does not imply right; more properly, given the potential expansion of legal personhood status to other sentient species, it will follow that what is good and desirable for Homo sapiens will also be good and desirable for other sapient species. If it can be shown that enhancement and postbiological existence are good and desirable for humans, and conversely that ongoing existence in a Darwinian state of nature is inherently undesirable, then we can assume that we have both the moral imperative and assumed consent to uplift non-human animals.

The latest Sentient Developments audiocast is now available; it is the audio broadcast of Mark Walker's talk on superlongevity held at the University of Toronto on April 20, 2006.


There have been a bunch of transhumanist- and futurist-related items in the news lately:


If you're looking to significantly augment your memory skills, but don't have the patience to wait for a cybernetic memory implant, mnemonic techniques may be the answer.

"The genetic genie is out of the bottle. There is not much anyone can do to put it back nor, once we understand its potential for good, ought we to do so. This genie will, however, do the bidding of those who control it. To enjoy the benefits genetics offers, it will be up to you and me and our children to build a politics, media, marketplace and educational system strong enough to show the genie who is the boss. We must all - scientist and nonscientist alike - play god when it comes to genetics." -- Arthur Caplan, Science Anxiety: Toward a less fearful future, published in the Philly Inquirer

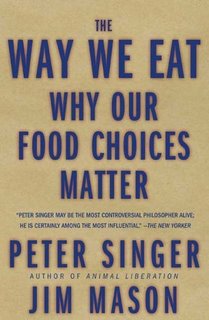

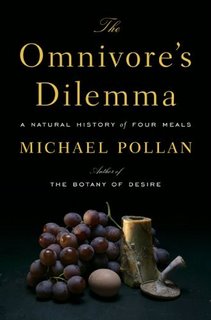

During the past several years I've paid increasing attention to my food choices in an effort to eat more healthily. Guided mostly by the sage advice of Dr. Andrew Weil, I've become very careful about the foods I put into my body. But lately I've been just as concerned with the ethics of my food choices as with their effect on my health.


There is one individual, however, who is pushing for long-term sustainable farming and an increase in ethical food options. He is an evangelical Virginia farmer named Joel Salatin who believes that a "revolution against industrial agriculture is just down the road." Similar to the hope that Big Energy will dissipate into smaller, localized and sustainable versions, Salatin envisions the end of mega-farms and supermarkets.

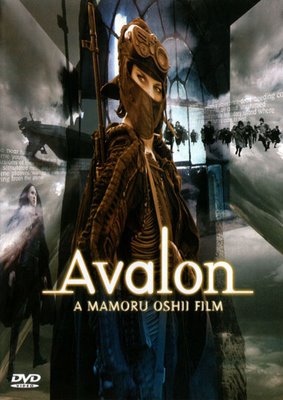

[Warning: this review contains lots of spoilers, but please, don't let that stop you.]